How We Built VAD Digital Sets for Ahsoka Using Unreal Engine | Breakdown

Hi, this article covers the art direction process we used to build virtual locations for in-camera VFX (ICVFX) on Lucasfilm's Ahsoka. I'll break down everything from the initial concept stages right through to the final, camera-ready (final-pixel) sets, and share some stories along the way. Let's go.

At a Glance:

Schedule

Concept Art and Design: 8 weeks

VAD Construction: 9 months

Volume Integration and Testing: 4 months

Resources

Number of Real-time VAD Artists: 14

Number of Engineers: 3

Deliverables

Hero Location Sets With Multiple Variants: 17

Set Variants: 65+

Photogrammetry Set Dressing Assets: 30+

Lighting Scenarios: 85+

VScout Storyboard Frames: 300+

Partner Reviews

Virtual Stage Walks With Production Designer: 190+

Prelight & Camera Blocking Sessions With DP: 65+

Hosted Creative Reviews (VScouts): 42+

So, What Exactly Were We Tasked to Do?

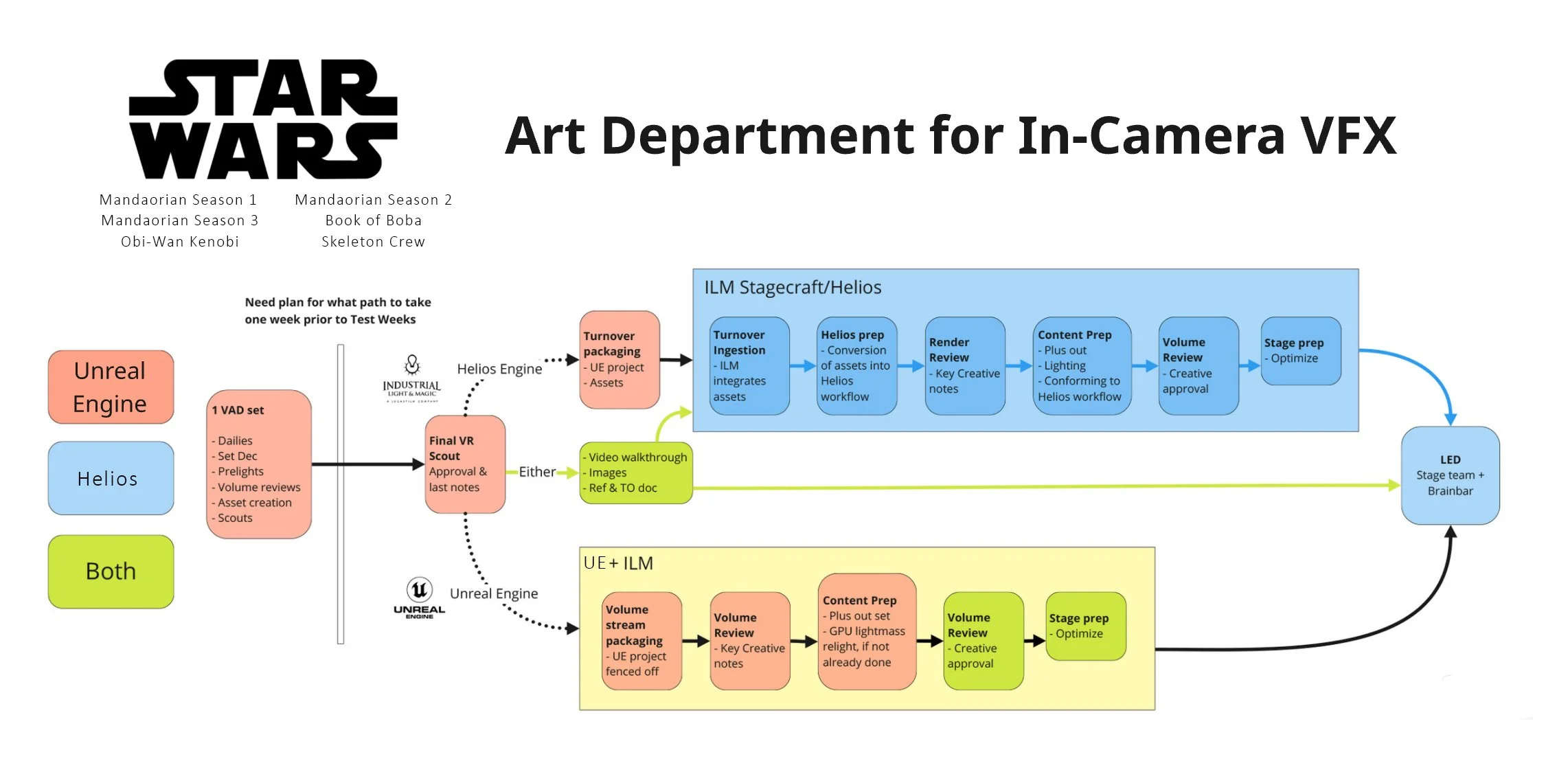

Image shows the workflow of Key Creatives, VAD, and ICVFX teams

We were tasked with building virtual sets in Unreal Engine that looked ready for the final film, right from pre-production. Basically, less "fix it in post" and more "get it right now." That meant totally rethinking how our team was set up and what skills we had on board: we shifted from a predominantly design team with some final-pixel artists to a team made up mostly of final-pixel artists.

There were actually three distinct virtual production workflows on this project. First, there was the Narwhal Studios workflow, which my partner and I designed, managed, and built teams for together. Then there was ILM Stagecraft, which ran on the Helios platform. Finally, there was a straight Unreal Engine workflow in which our environments went directly onto the stage.

The Narwhal Studios workflow involved daily reviews of the environments with the production designer. We collaborated closely with set decorators, conducted pre-lights with cinematographers, and ran weekly virtual scouts to review sets together, designing scenes and planning shots in detail. We also optimized assets based on what the camera would actually see, which was a huge efficiency boost.

Once approved, these virtual sets went through a final VR scout for validation. From there, they were bundled into a turnover package and delivered to ILM's Stagecraft team. Helios took these Unreal Engine projects and integrated them into ILM's internal system. That process required careful asset conversion (which we supported throughout), thorough creative reviews, and additional content preparation to ensure everything matched exactly what we'd developed in pre-production. After that optimization, the sets were ready for the shoot.

For the direct Unreal Engine workflow, we streamlined the process even further. We packaged scenes into individual volume streams out of Perforce (for security reasons), conducted a quick stage review, relit scenes based on cinematographer feedback, and optimized immediately. Often this step required minimal adjustments.

Finally, we delivered comprehensive packages directly to the Brainbar team, complete with video walkthroughs, images, and detailed references, so that everyone knew exactly what we'd designed in pre-production.

How Did Things Kick Off?

Image shows the ICVFX process for the Helios and Unreal Engine workflows

It all started with meetings between production designer Andrew Jones and me to review reference and concept art. At this stage, we brought the set designers' 3D models, VFX assets, and various asset libraries into a unified Perforce database, configuring server permissions so that each department had secure access based on the show's requirements.

From there, we created initial block-outs using simple shapes. These block-outs were crucial because they laid the groundwork for everything that followed. Although this step might seem basic, it set the foundation of the environments and kept the vision cohesive moving forward.

What Steps Did We Take to Develop Assets and Set Decor?

Image shows comparison between the Maya layout and the Unreal Engine lit version

The development of a set goes through several stages that help keep creatives on schedule. I take sets from block-out through previs, then into look development, and finally to the finished version.

The final version is carefully vetted, tested on stage, and optimized, resulting in an Unreal Engine file for each scene that includes multiple lighting scenarios. From there, the sets are handed off to the stage teams, who prepare them for the shoot and handle any last-minute tweaks.

How Did We Review the Virtual Sets (Digital Environments)?

Image showing the virtual location scout of the key creatives on Ahsoka

On The Mandalorian Season 1, we used Virtual Location Scouts. For Ahsoka, I helped introduce remotely driven, multi-user Virtual Location Scouts, which were crucial for keeping everyone aligned.

I hosted regular sessions with directors, production designers, DPs, storyboard artists, writers, and producers to review locations in real time, place cameras, and create still frames. These frames guided focus and were used across all departments, including previs, VAD, VFX, and physical construction.

Ahsoka Key Creative Team:

Andrew Jones - Production Designer

Doug Chiang - Production Designer

Jon Favreau - Creator/Executive Producer/Writer

Dave Filoni - Creator/Executive Producer/Director/Writer

Eric Steelberg - Director of Photography

Q Tran - Director of Photography

Rachel - Art Director

Clint Spillers - VP Producer

What Were the Results of a Virtual Location Scout?

Image showing a collection of screenshots from scouted cameras displayed in Unreal Engine during a virtual location scout with key creatives

These multi-user virtual location scouts lasted 1 to 2.5 hours and produced 30 to 80 usable frames per session, which were immediately shared with key production members.

I also provided remote access for creatives to explore environments from their homes whenever they wanted. Facilitating cloud-based meetings allowed them to collaborate on a virtual location and work on their story together, ensuring all decision-makers remained aligned.

Where Did Photogrammetry Fit In Pre-production?

Image showing a miniature created by Jason Mahakian, which was scanned and brought into Unreal Engine

Photogrammetry of life-size props, miniatures, and set decorations allowed the art department to drive the look and marry the physical and virtual worlds. These scanned assets went through a cleanup process in which textures were flattened and low-, medium-, and high-resolution versions were created.
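To make the resolution tiers concrete, here is a minimal sketch of deriving high, medium, and low texture sizes from one cleaned-up scan texture. It assumes each tier halves the previous one with a minimum size floor; the divisors, the 256-pixel floor, and the names `TextureTier` and `build_texture_tiers` are hypothetical illustrations, not the values or tools used on the show.

```python
from dataclasses import dataclass

# Hypothetical divisors: the article only says low, medium, and high
# resolution versions were made, not which sizes were used.
TIER_DIVISORS = {"high": 1, "medium": 2, "low": 4}


@dataclass(frozen=True)
class TextureTier:
    name: str
    width: int
    height: int


def build_texture_tiers(width: int, height: int, min_size: int = 256) -> list[TextureTier]:
    """Derive high/medium/low texture sizes from one cleaned-up scan texture."""
    tiers = []
    for name, divisor in TIER_DIVISORS.items():
        # Each tier halves the previous one, clamped to a floor so small props stay readable.
        tiers.append(
            TextureTier(name, max(width // divisor, min_size), max(height // divisor, min_size))
        )
    return tiers


if __name__ == "__main__":
    for tier in build_texture_tiers(8192, 8192):
        print(f"{tier.name}: {tier.width}x{tier.height}")
```

Run on an 8192x8192 scan, this prints 8192x8192, 4096x4096, and 2048x2048 for the high, medium, and low tiers. In practice, per-asset budgets would depend on how close the asset sits to camera.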

Whenever we scanned a miniature, I collaborated closely with model makers to scale them into life-size sets, using them as foundations for block-outs. The Seatos Stonehenge, shown above, is a good example of this.

Out of the 17 hero locations, 7 utilized photogrammetry captured with the art department.

What Was Our Approach to Lighting and Rendering?

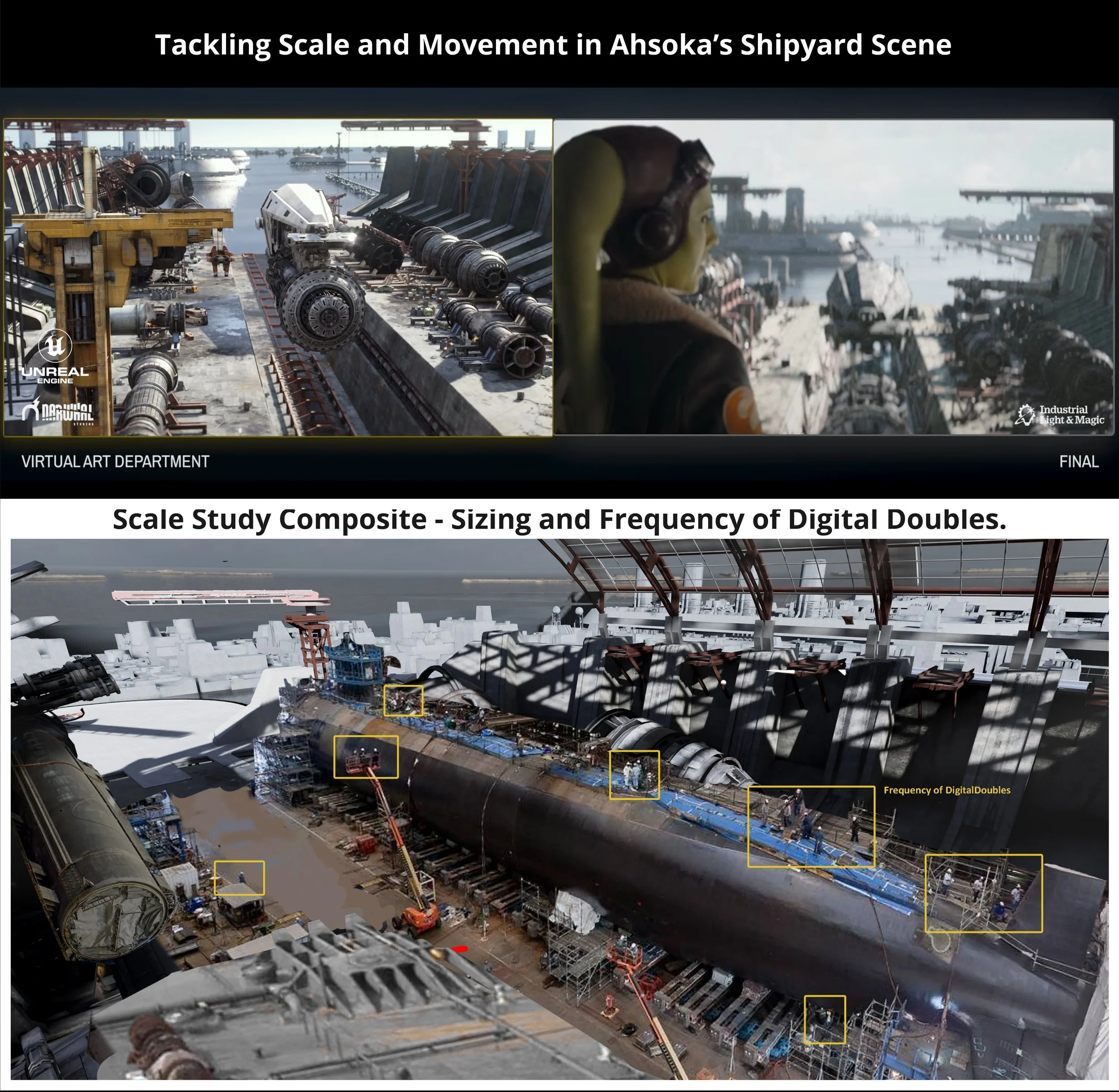

Comparison showing VAD environment (left) and the final shot environment (right)

My team started by understanding the action and mood of each scene, pulling references to help guide the visual direction. We began lighting with the major shapes in place, using broad strokes to establish key angles and camera placements. As the scene developed, we focused on sculpting the light to highlight areas of interest, adjusting color and intensity as textures and materials came into play. Once the visual language started to click, we fine-tuned light placement and camera settings to reach the desired complexity and impact.

Before handoff, we ran stage tests and incorporated feedback to make sure everything was dialed in and production-ready. Once the lighting was approved through the lens on stage, we handed it off to the stage team.

Why Is Turnover and Techvis So Important?

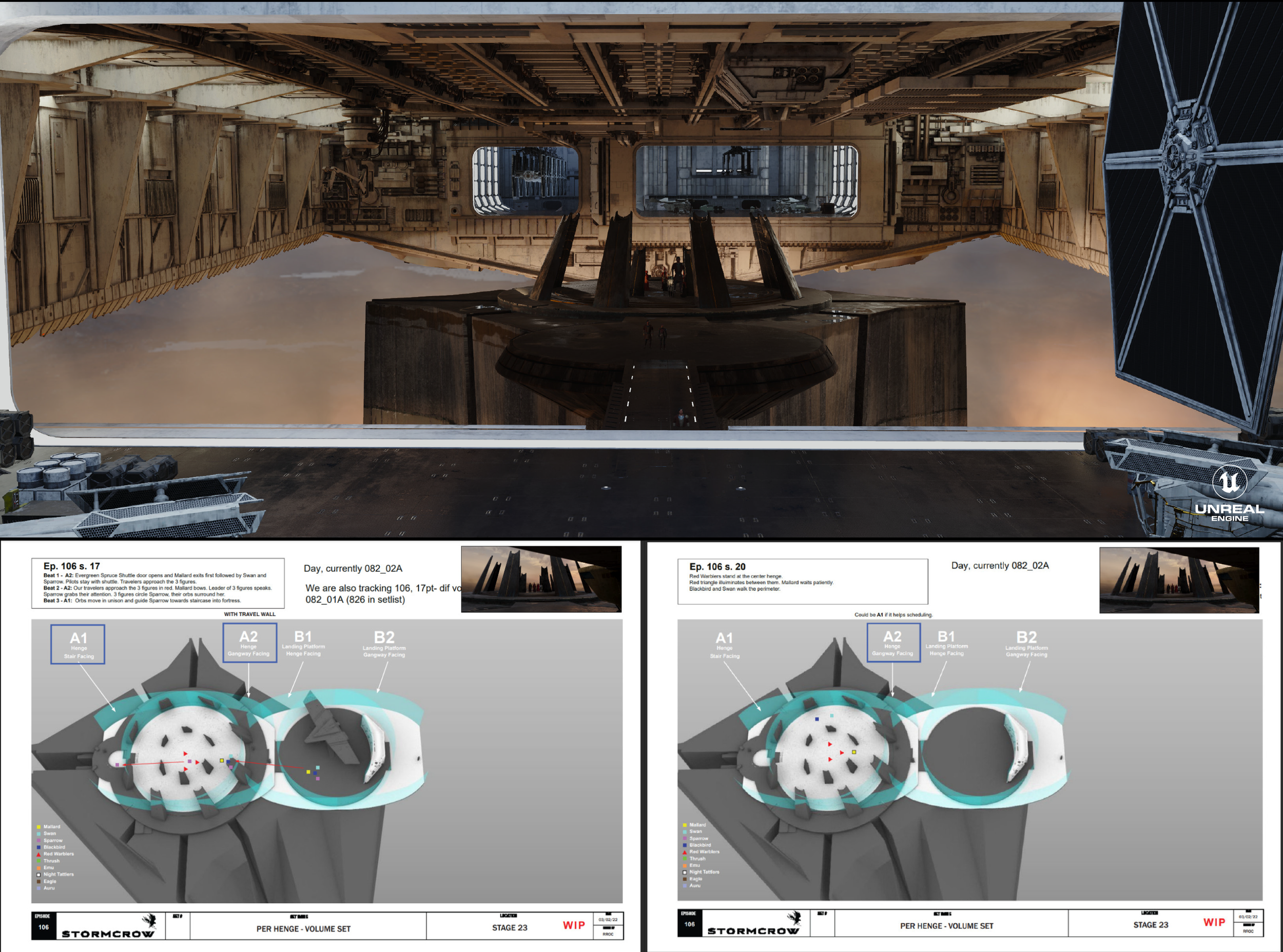

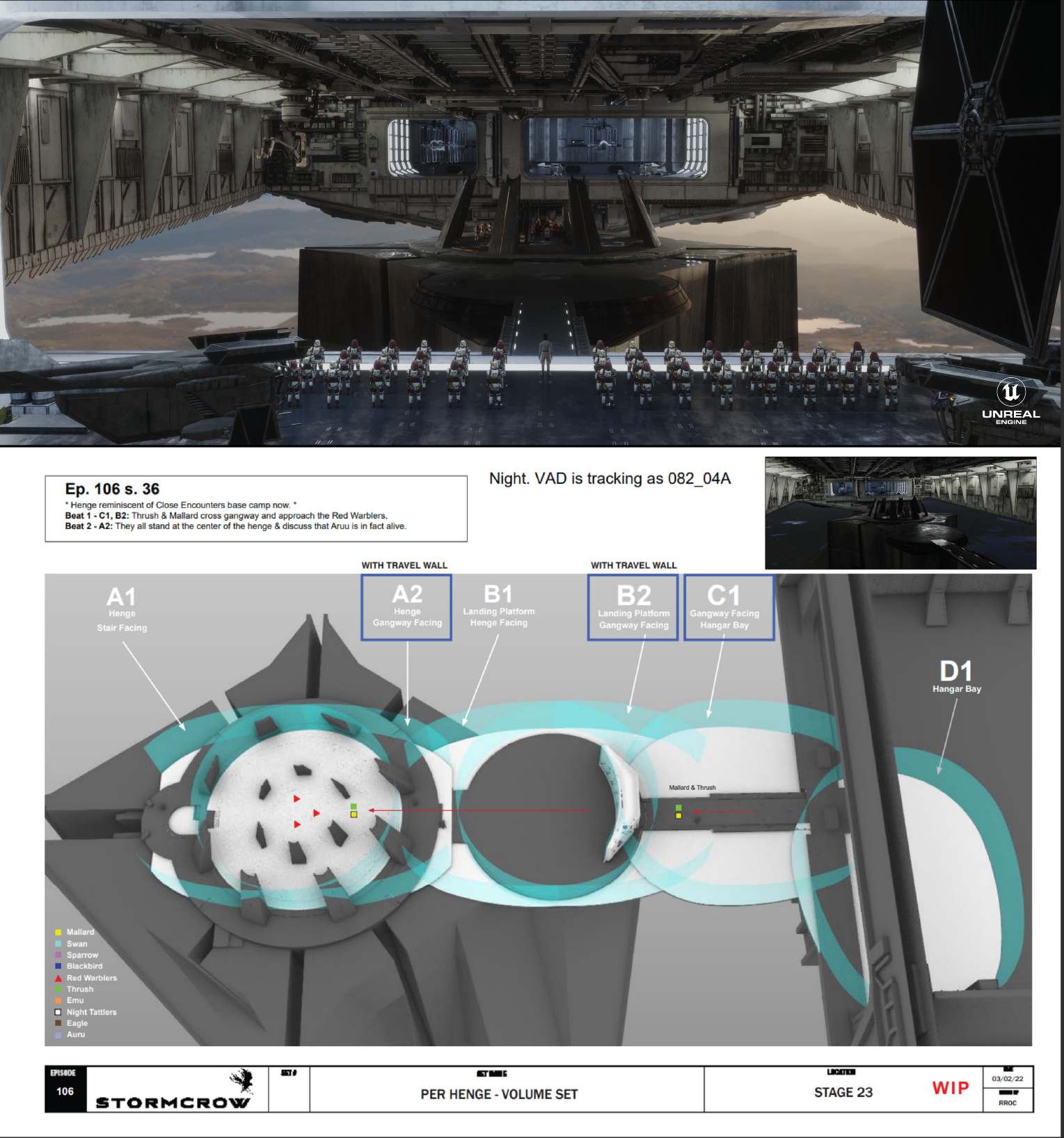

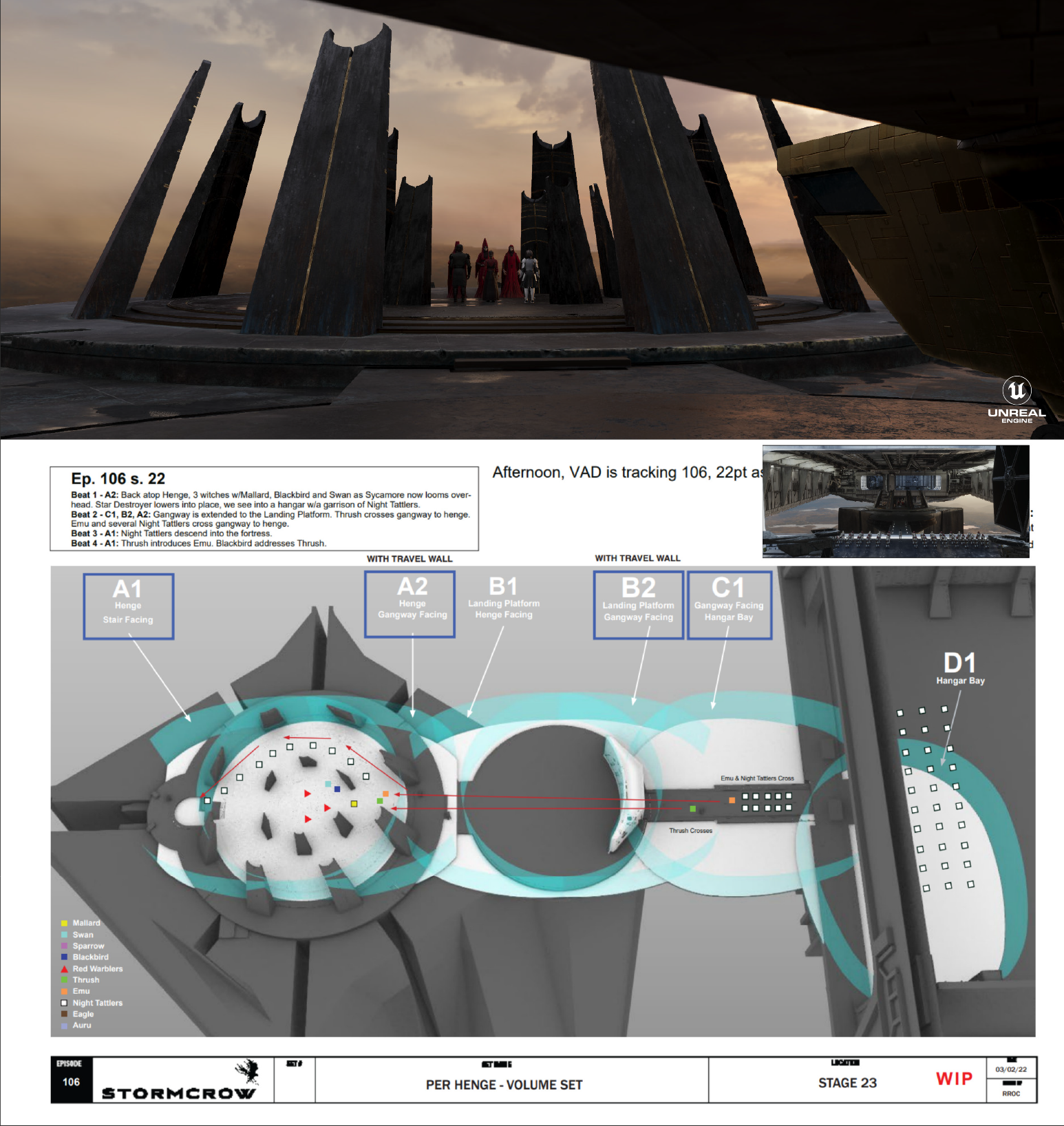

The turnover process was a very important part of planning the shoot, involving Techvis developed in close collaboration with the art department, LED stage, production, and VFX teams.

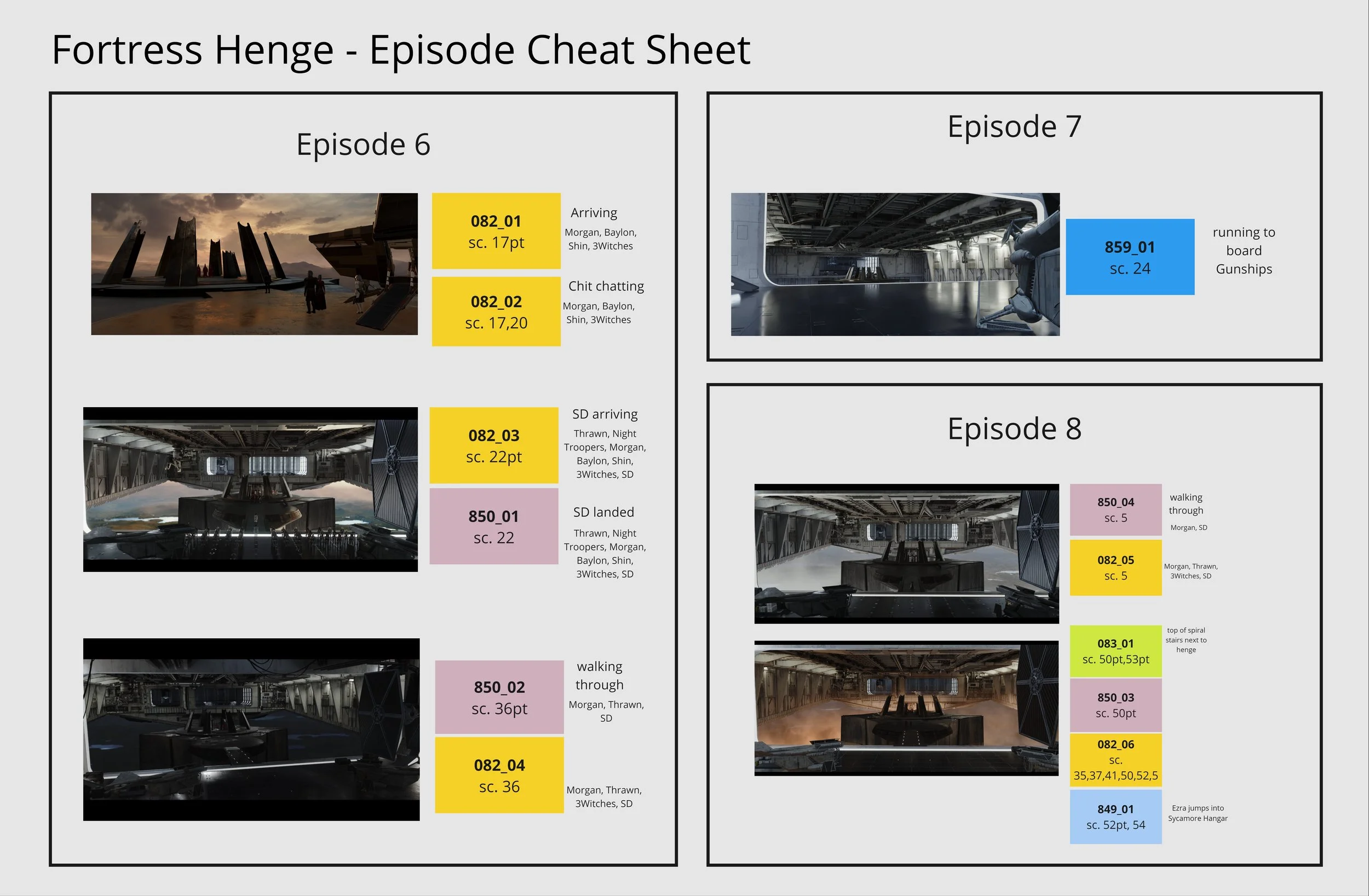

For the Per Henge Volume Set, various lighting scenarios and volume placements were used to accommodate different scenes.

Each set variant, such as night, late morning, and late afternoon, requires specific adjustments to set decoration and lighting. These elements are planned and coordinated before filming to keep production running smoothly. The location of each volume in the Unreal Engine scene, labeled A1, A2, B1, B2, and so on, dictates the content displayed on the screens. While some lighting scenarios and set decorations stay consistent across scenes, others require specific alterations to match the story beats.
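One way to picture this planning is as a lookup table keyed by set variant and volume placement. The sketch below is a hypothetical illustration: the `StageContent` type, the lighting and dressing names, and the table entries are assumptions, not the production's actual data or pipeline. It shows how each (variant, placement) pair resolves to specific wall content, and how grouping by dressing layer separates shared dressing from beat-specific alterations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StageContent:
    lighting: str  # hypothetical lighting-scenario name
    dressing: str  # hypothetical set-dressing layer name


# Hypothetical turnover table: (set variant, volume placement) -> wall content.
# Placement labels follow the A1/A2/B1 convention from the article.
TURNOVER = {
    ("night", "A1"): StageContent("night", "shared_dressing"),
    ("night", "B1"): StageContent("night", "night_story_beat_dressing"),
    ("late_morning", "A1"): StageContent("late_morning", "shared_dressing"),
    ("late_afternoon", "A2"): StageContent("late_afternoon", "shared_dressing"),
}


def content_for(variant: str, placement: str) -> StageContent:
    """Look up what to load for a given set variant and volume placement."""
    try:
        return TURNOVER[(variant, placement)]
    except KeyError:
        raise KeyError(f"No content planned for {variant} at volume {placement}") from None


def dressing_usage(
    table: dict[tuple[str, str], StageContent],
) -> dict[str, list[tuple[str, str]]]:
    """Group (variant, placement) keys by dressing layer: shared vs. beat-specific."""
    usage: dict[str, list[tuple[str, str]]] = {}
    for key, content in table.items():
        usage.setdefault(content.dressing, []).append(key)
    return usage
```

For example, `content_for("night", "B1")` returns the beat-specific dressing, while `dressing_usage(TURNOVER)` shows that `shared_dressing` is reused across three placements. A missing pair raises an error rather than silently loading the wrong content.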

Regular LED-stage testing was conducted with the director of photography and other key creatives to ensure the virtual sets looked realistic and met the creative vision. Feedback from these tests was used to refine the environments and was then passed on to the ILM team.

The turnover process included detailed documentation covering techvis, volume placements, virtual scout cameras, set decoration, reference, and concept art. This method mirrors the coordination that physical construction teams in the art department typically carry out, adapted to the virtual environment.

How Did We Handle Multiple Sets at Once?

Image showing the Shotgrid project management tasks and schedule on Ahsoka for the VAD

Each environment for Ahsoka had unique challenges and required a tailored approach to effectively tell the story. To manage this, I organized pods with an art director, team lead, set owners, and VAD and PHG artists, running up to four sets at once.

Set owners worked with generalist VAD artists to finalize designs, guided by me and the VAD Lead, who prioritized tasks based on feedback from the VP Producer and Production Designer. This process focused on reviewing still frames from weekly virtual location scouts to address the most critical elements.

All of this was managed in Shotgrid, which we heavily customized to suit the virtual art department workflow. I programmed specific functionality so the schedule could easily adapt to daily sprint plans, which drove frequent changes.

Daily sprint planning kept our focus on the most crucial tasks. Updates were then communicated through Shotgrid and Mattermost, a secure, server-hosted chat platform that kept everything off the internet.

Any Interesting Challenges?

The Peridea Henge set had six different Unreal lighting setups to match the script's needs, plus five unique scenes with different dressing and moods for the stage team.

The Shipyard in Ahsoka was huge: a long corridor built up from block-outs with submarine-factory vibes. Working closely with Andrew Jones and Eric Steelberg, I used techvis to test shots and make sure everything fit.

The Noti Reveal was originally a misty landscape but got reworked to better fit the final story.